
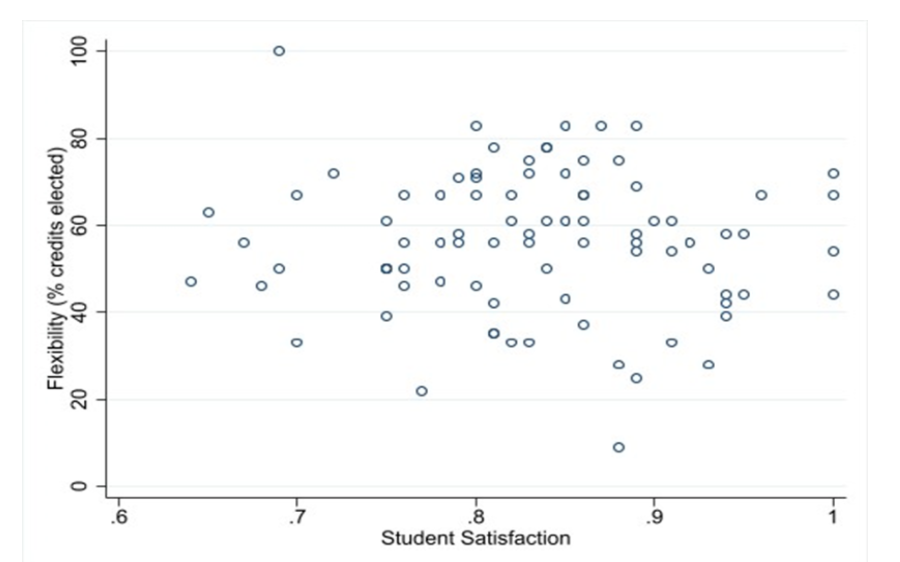

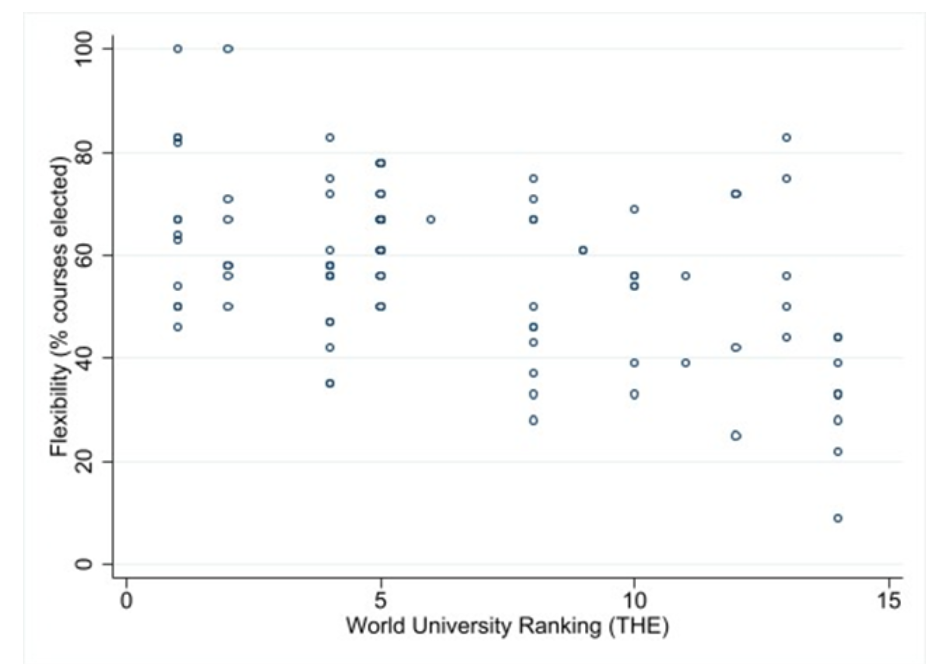

Co-authored with Talischa Schilder and Johan Adriaensen, originally published by Wonkhe on 9 March 2022.

The Covid crisis has both highlighted and challenged the marketisation of universities. Students have gone on rent strikes and demanded a reduction of their tuition fees: as customers, they are not satisfied with the service they have paid for. An important aspect of the marketisation of universities is how these institutions generate their income. Following reforms by David Cameron’s government, public funding has become principally linked to the number of admitted students, alongside a further rise in tuition fees. Consequently, UK universities compete on the education “market” for more enrolled students, or for a quality premium on their services, to generate more income. So what might entice student-customers to pay such high fees?

Have it your way

Many argue that incorporating electives or module choice into a programme is a strategy that will attract more students. The thinking goes that students are responsible for their own learning experience and are rational individuals, capable of choosing what suits them best. In this framing, freedom of choice creates a sense of autonomy that attracts student-customers. In other words, the student is the customer, and the customer is king. Curriculum flexibility is supposed to raise not only student satisfaction ratings but also an institution’s brand strength, ranking and reputation. These factors, in turn, boost the number of student applications and ultimately the institution’s revenue stream. But is that how it works in practice?

Back here in the real world

We have analysed ninety-three undergraduate programmes in Political Science and International Relations across the UK.
In the figure below, we have plotted each programme’s flexibility (the percentage of credits that are electives) against student satisfaction as measured by the UK National Student Survey (NSS). If there were a connection, we would expect the data points to cluster around an upward-sloping line, but they are scattered. We acknowledge the widely voiced criticism of the validity of NSS metrics, in particular their use in the Teaching Excellence Framework (TEF). However, the theory holds that such statistics are central to the public construction of reputation and brand. We can conclude that curriculum flexibility increases neither student satisfaction nor TEF ratings.

Another important feature of the marketisation of universities is the focus on rankings and reputation as integral to an institution’s brand. Such statistics can serve as a quality guarantee for potential students, thereby steering their choice of university. In theory, older and higher-ranking universities are less exposed to the workings of the free market, because their strong brand generates a steady influx of students and external funding regardless of any marketing strategy. The hypothesis would then be that younger and lower-ranking universities offer more flexibility in their undergraduate programmes to attract more students.

Upside down

In reality, however, our research indicates that higher-ranking universities lean towards a free-elective system. Whether we use the QS Global Ranking, the Times Higher Education World Ranking or the Guardian League Table 2020, lower-ranking universities with a weaker brand name offer relatively rigid undergraduate programmes compared with the elite institutions.

How do we explain these contrasting results? Older and higher-ranking universities are known to enjoy larger financial resources.
These institutions are therefore able to offer study programmes with more free electives and specialisation courses than younger and lower-ranking universities. Deeper pockets enable a higher staff-to-student ratio, which allows senior academics to teach electives in their field of expertise while leaving the prescribed subjects to teaching assistants. In the academic debate, curriculum flexibility is associated with the marketisation of universities, in which the pursuit of revenue may become the primary interest at the expense of the quality guaranteed by a prescribed curriculum. Our research suggests, however, that the incorporation of elective or optional courses (modules) into undergraduate programmes is better understood as a premium, “luxury” service.
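The two quantities behind the flexibility/satisfaction comparison can be illustrated with a short sketch. It assumes flexibility is computed as elective credits divided by total credits, and it summarises “clustering around an upward-sloping line” with a Pearson correlation coefficient (close to +1 for such a pattern, close to 0 for scattered points); the post itself only shows a scatter plot. The function names and the four programmes below are hypothetical, not the authors’ data.

```python
from math import sqrt


def flexibility(elective_credits: float, total_credits: float) -> float:
    """Hypothetical helper: share of a programme's credits that are electives, in percent."""
    return 100 * elective_credits / total_credits


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation: +1 if points lie on an upward-sloping line, near 0 if scattered."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical programmes (NOT the authors' data):
# (elective credits, total credits, NSS satisfaction in %).
programmes = [(60, 360, 84), (120, 360, 79), (180, 360, 86), (240, 360, 81)]
flex = [flexibility(e, t) for e, t, _ in programmes]
nss = [s for _, _, s in programmes]

print([round(f, 1) for f in flex])   # [16.7, 33.3, 50.0, 66.7]
print(round(pearson(flex, nss), 2))  # -0.08: no clear linear relationship
```

With real data, one would also want to look at the plot itself, since a correlation coefficient only captures linear association.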

While “develop your own curriculum” is a catchphrase on many university websites to woo potential applicants, curriculum flexibility is not associated with higher student satisfaction. Instead, it is an organisational trait associated with (past) wealth that is actively marketed. Considering the financial constraints under which (smaller) universities operate, and more specifically the tenuous position of Political Science programmes, we caution against emulating the flexible curricula of higher-ranking institutions. It is not the silver bullet many may be looking for.


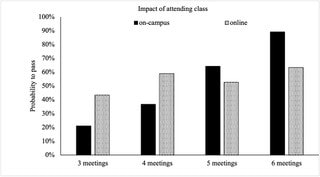

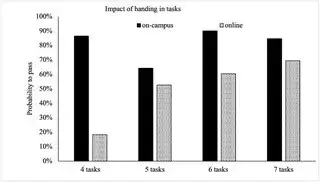

Co-authored with Arjan Schakel, originally published by Active Learning in Political Science on 10 February 2021.

Students and staff are experiencing challenging times, but, as Winston Churchill famously said, “never let a good crisis go to waste”. Patrick recently led a new undergraduate course on academic research at Maastricht University (read more about the course here). Due to COVID-19, students could choose between online and on-campus teaching, which resulted in 10 online groups and 11 on-campus groups. This gave us an opportunity to compare the performance of students who took the very same course, either on-campus or online. Our key lesson: focus particularly on online students and their learning.

In exploring this topic, we build on our previous research on the importance of attendance in problem-based learning, which suggests that students’ attendance may affect their achievements independently of their characteristics (i.e. teaching and teachers matter, something other scholars have also suggested). We created an anonymised dataset consisting of students’ attendance, the number of small intermediate research and writing tasks they had handed in, whether they belonged to an on-campus or online group and, of course, their final course grade. The latter was based on a short research proposal graded Fail, Pass or Excellent.

316 international students took the course, of whom 169 (53%) took it online and 147 (47%) on-campus. 255 submitted a research proposal, of which 75% passed. One reason why students did so well (normal pass rates are about 65%) might be that, as this was a new course, the example final exam they were given had been written by the course coordinator. Bolkan and Goodboy suggest that students tend to copy examples, so providing them is not necessarily a good thing.
Yet students had also done well in previous courses, with the cohort seemingly very motivated to do well despite the circumstances. On closer inspection, however, it is telling that 31% of the online students (52 out of 169) did not receive a grade, i.e. they did not submit a research proposal. For on-campus students, this was 9.5% (14 out of 147)[1]. Perhaps this is the result of self-selection, with motivated students having opted for on-campus teaching. Either way, online teaching clearly affects study progress, and programme directors and course leaders need to prioritise increasing online students’ participation in the examination.

We focus on students who attended at least one meeting (out of a maximum of 6) and handed in at least one assignment (out of a maximum of 7). Of these 239 students, 109 were online students (46%) and 130 on-campus students (54%). Interestingly, on average these 239 students behaved quite similarly across the online and on-campus groups: they attended about 5 meetings (online: 4.9; on-campus: 5.3) and handed in 5 to 6 tasks (online: 5.0; on-campus: 5.9).

We ran a logit model with a simple dummy variable as the dependent variable, capturing whether a student passed the course. As independent variables, we included the total number of meetings attended and the total number of tasks handed in. Both variables were interacted with a dummy variable indicating whether students followed online or offline teaching, and we clustered standard errors by the 21 tutor groups. Unfortunately, we could not include control variables such as age, gender, nationality and country of pre-education. These would have helped to rule out alternative explanations and to gain more insight into what drives differences in performance between online and offline students.
For example, international students may have been more likely to opt for online teaching and may have faced time-zone differences, language issues or other problems.

Figure 1 displays the effect of attending class on the probability of passing the final research proposal. The predicted probabilities are calculated for an average student who handed in 5 tasks. Our first main finding is that attendance did not matter for online students, but it did for on-campus students. The differences in predicted probabilities between attending 3, 4, 5 or 6 meetings are statistically significant (at the 95% confidence level) for on-campus students but not for online students. Students who attended the maximum of six on-campus meetings had a probability of passing that was 68 percentage points higher than that of a student who attended 3 meetings (89% versus 21%), and 52 percentage points higher than that of a student who attended 4 meetings (89% versus 37%).

Figure 2 displays the effect of handing in tasks on the probability of passing the final research proposal. The predicted probabilities are calculated for an average student who attended 5 online or on-campus meetings. Our second main finding is that handing in tasks did not matter for on-campus students, but it did for online students. The differences in predicted probabilities between handing in 4, 5, 6 or 7 tasks are statistically significant (at the 95% confidence level) for online students but not for on-campus students. Students who handed in the maximum of seven tasks had a probability of passing that was 51 percentage points higher than that of a student who handed in four tasks (69% versus 18%), and 16 percentage points higher than that of a student who handed in five tasks (69% versus 53%).
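In formula form, the logit model described above can be sketched as follows. This is a reconstruction for illustration, not the authors’ published specification: the symbols are ours ($M_i$ = meetings attended, $T_i$ = tasks handed in, $O_i = 1$ for online students), and we assume the online dummy also enters as a main effect, as is standard when interacting variables. The last line shows why the reported differences are in percentage points: they are differences between two predicted probabilities.

```latex
\Pr(\text{pass}_i = 1) = \Lambda\bigl(\beta_0 + \beta_1 M_i + \beta_2 T_i + \beta_3 O_i
    + \beta_4 M_i O_i + \beta_5 T_i O_i\bigr),
\qquad \Lambda(z) = \frac{1}{1 + e^{-z}}

% On-campus student (O_i = 0) who handed in 5 tasks:
\hat{p}_{\text{campus}}(M) = \Lambda\bigl(\beta_0 + 5\beta_2 + \beta_1 M\bigr)

% Online student (O_i = 1) who handed in 5 tasks:
\hat{p}_{\text{online}}(M) = \Lambda\bigl(\beta_0 + \beta_3 + 5(\beta_2 + \beta_5) + (\beta_1 + \beta_4) M\bigr)

% Reported contrast for on-campus students, in percentage points:
\hat{p}_{\text{campus}}(6) - \hat{p}_{\text{campus}}(3) = 0.89 - 0.21 = 0.68
```

Under this sketch, the attendance effect on the log-odds is $\beta_1$ for on-campus students and $\beta_1 + \beta_4$ for online students, which is roughly what “attendance did not matter for online students” refers to.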

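The significance test in footnote [1] can be reproduced from the counts reported above. This is a minimal sketch, assuming (the post does not say so) that it is a Student’s two-sample t-test with pooled variance on a 0/1 “no grade” indicator; under that assumption, the reported N = 314 matches the test’s degrees of freedom, 169 + 147 - 2. The function name is ours.

```python
from math import sqrt


def pooled_t_binary(k1: int, n1: int, k2: int, n2: int) -> tuple[float, int]:
    """Student's two-sample t-test (pooled variance) on a 0/1 outcome.

    k1, k2: students in each group with the outcome (here: no grade).
    n1, n2: group sizes. Returns (t statistic, degrees of freedom).
    """
    m1, m2 = k1 / n1, k2 / n2
    # For a 0/1 variable, the sum of squared deviations from the mean is n * m * (1 - m).
    ss1 = n1 * m1 * (1 - m1)
    ss2 = n2 * m2 * (1 - m2)
    df = n1 + n2 - 2
    pooled_var = (ss1 + ss2) / df
    se = sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / se, df


# Online: 52 of 169 without a grade; on-campus: 14 of 147.
t, df = pooled_t_binary(52, 169, 14, 147)
print(f"t = {t:.2f}, df = {df}")  # t = 4.78, df = 314
```

If SciPy is available, `scipy.stats.ttest_ind` on the two 0/1 arrays (with its default `equal_var=True`) should give the same statistic. A pooled two-proportion z-test gives a somewhat smaller value (about 4.6), which is why we assume the t-test here.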
Note that we do not claim that attendance is irrelevant for online students or that handing in tasks is irrelevant for offline students. Our dataset does not include enough students to detect these effects. From our previous research, we know that we can generally isolate the impact of various aspects of course design with data from three cohorts (around 900 students). The very fact that we find remarkably clear-cut effects of attendance among on-campus students, and of handing in tasks among online students, in a relatively small sample (fewer than 240 students) indicates that these effects are strong enough to surface and become statistically significant even in a dataset as small as ours. This is why we feel confident in advising programme directors and course leaders to focus on online students. As Alexandra Mihai also recently wrote, it is worth investing time and energy in enhancing online students’ participation in final examinations and in offering them many small assignments to hand in throughout the course. This is not to say that on-campus students and their participation in meetings deserve no attention but, given limited resources and the gains to be achieved among online students, we think it would be wise to focus on online students first.

[1] The difference of 21 percentage points in missing grades between online and offline students is statistically significant at the 99% level (t = 4.78, p < 0.001, N = 314 students).

Written with Afke Groen and previously published by Active Learning in Political Science on 13 February 2020.

We are going to be honest with you from the outset: this blog is not about our teaching experience, but about an ongoing research project that we are working on with our colleague Johan Adriaensen and our student assistant Caterina Pozzi (both also at Maastricht University). And it gets worse: this is a blog that ends with a cry for help.

We are working on a research project studying undergraduate curriculum design in European Studies, International Relations and Political Science. Surprisingly, there is relatively little research on actual curriculum design within the Scholarship of Teaching and Learning, in particular when it comes to such broad fields. Sure, there has been a debate about what curriculums in these fields should look like. Some of our colleagues have, for instance, asked whether there is, or should be, such a thing as a core curriculum in European Studies, while others have looked at interdisciplinarity in the field of Politics. Similarly, at the policy level there have been some attempts to flesh out benchmarks and standards in European Studies, and in International Relations and Politics. What is missing, however, is a thorough attempt to build a database of programmes in European Studies, International Relations and Politics, and to compare the characteristics of these programmes. This is where our ongoing research project comes in.

The project builds on previous work by Johan and us, published in the Journal of Contemporary European Studies and European Political Science (in production). Both articles concern the training and monitoring of generic skills in active learning environments. Our new project takes a broader perspective on skills and methods in curriculum design. We conduct a meta-study of undergraduate programmes offered by the member institutions of APSA, ECPR and UACES, exploring three key themes in particular: (1) the teaching of skills, practical experience and employability; (2) the degree of interdisciplinarity; and (3) the flexibility and coherence of the programme.

All in all, we hope (1) to provide a unique and comprehensive database of how curricula are organised in practice. On this basis, (2) we will distinguish various types of curriculums and evaluate their advantages and disadvantages.
Our final objective is (3) to formulate best practices for university teachers and programme developers. As such, the database also promises to be a useful resource for university policies, in particular in light of challenges such as the constantly changing objects of study in European Studies, International Relations and Politics, and an increasingly diverse and international student body.

Although we are still gathering data, we can already share a couple of interesting observations with you. For one, while some universities seem to think that programmes in European Studies, Politics and International Relations are no longer really necessary, it is good to see that future students can still choose from a wide array of such programmes. Indeed, the curriculums that we have coded so far look quite different. For instance, our own BA in European Studies seems to pay much more specific attention to methods and skills development through separate courses (and many of them). Another striking difference between programmes is the extent of choice offered to students: while some programmes consist mostly of large, compulsory courses, others include a wide array of electives or ‘tracks’ from diverse fields of study (sometimes with over 100 or even 200 optional courses!).

The latter is also one of our main challenges: it is not always clear what exactly constitutes a programme’s curriculum. Often, the respective websites are not very clear (university websites are generally rather dense), and core programme documents that might help us are impossible to find. This is particularly the case for Eastern European and US programmes, which often revolve around a major/minor set-up.

Hence, we need your help! If you are based at a university and/or teach in a programme that is a member of APSA, ECPR or UACES, your input would be very welcome.
If there is any documentation that you think might help us code Eastern European and US programmes, we would be very grateful if you could send it to [email protected].

We do offer something in return. First, we will keep you posted through Twitter and blogs. Second, we hope to organise panels and workshops on curriculum design at conferences, such as this year’s European Teaching & Learning Conference in Amsterdam. If you would like to contribute to such get-togethers, do let us know. Finally, our aim is eventually to give colleagues access to our database, starting with those of you who help us move the project forward!
