|

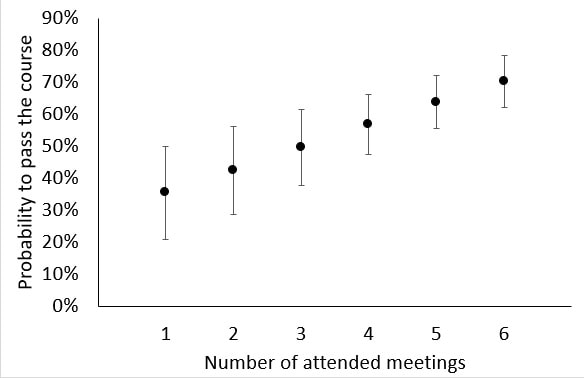

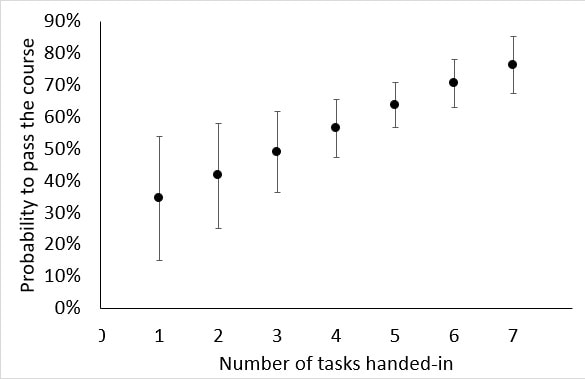

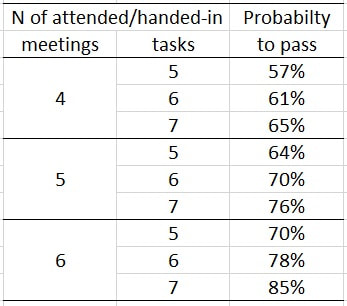

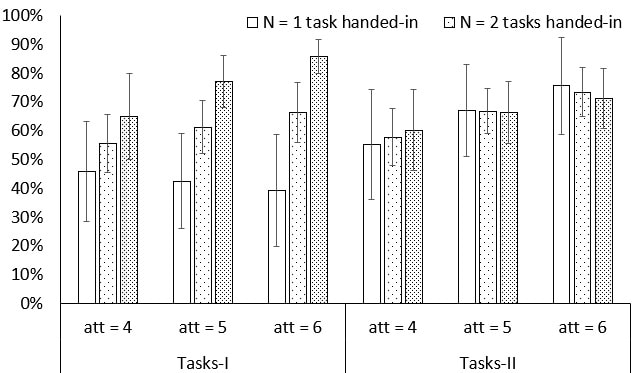

Co-authored with Arjan H. Schakel, originally published by Active Learning in Political Science on 25 March 2022.

In one of his recent contributions to this blog, Chad asks why students should attend class. In his experience, "[C]lass attendance and academic performance are positively correlated for the undergraduate population that I teach. But I can’t say that the former causes the latter given all of the confounding variables." The question of whether attendance matters pops up often; it is reflected in blog posts, such as those by Chad and by Patrick’s colleague Merijn Chamon, and in recent research articles on the appropriateness of mandatory attendance and on student drop-out. In our own research we present strong evidence that attendance in a Problem-Based Learning (PBL) environment matters, even for the best students, and that attending or not attending class also influences whether international classroom exchanges benefit student learning.

Last year we reported on an accidental experiment in one of Patrick’s courses that allowed us to compare the impact of attendance and the submission of tasks in online and on-campus groups in Maastricht University’s Bachelor in European Studies. We observed that attendance appeared to matter more for the on-campus students, whereas handing in tasks was important for the online students. This year the same course was taught fully on-campus again, although students were allowed to join online when they displayed symptoms of or had tested positive for Covid-19 (this ad-hoc online participation was, unfortunately, not tracked). We did the same research again and there are some notable conclusions to be drawn.

In the first-year BA course that we looked at, students learn how to write a research proposal (see here). The course is set up as a PBL course, so it does not come as a big surprise that attendance once again significantly impacted students’ chances of passing the course.
Figure 1 displays the impact of the number of attended meetings on the probability that a student will pass the course. Not surprisingly, the impact of attendance is large: a student who attends only one meeting is more likely to fail than to pass (35% probability of passing), whereas a student who attends all meetings is likely to pass (70%).

Notes: Shown are the predicted probabilities and their 95% confidence intervals. The results are based on a logit model in which 175 students are clustered by 18 tutor groups and which includes the number of attended meetings, the number of tasks handed in, and their interaction. All differences between the predicted probabilities are statistically significant (p < 0.01).

Figure 2 displays the impact of the number of tasks handed in on the probability of passing the course. This impact is also large: a student who hands in only one task is more likely to fail than to pass (34% probability of passing), whereas a student who hands in all tasks is likely to pass (76%). Comparing the impacts of attendance and handing in assignments, we observe that attendance matters as much as handing in assignments, but a significant interaction effect signals that the two strengthen each other.

In Table 1 we display the combined impact of attendance and handing in tasks on the probability of passing the course. Most students (112/175 = 64%) attended 4 to 6 meetings and handed in 5 to 7 tasks. Hence, we zoom in on these students to disentangle the separate impacts of attendance and of tasks handed in.

Notes: Shown are the predicted probabilities and their 95% confidence intervals. The results are based on a logit model that includes an interaction effect between the number of attended meetings and the number of tasks handed in, and in which 175 students are clustered by 18 tutor groups.
All differences between the predicted probabilities are statistically significant (p < 0.05, except when the number of attended meetings is 4: p < 0.10).

The difference between the predicted probabilities for 5 and 7 handed-in tasks ranges from 8 percentage points when a student attended 4 meetings to 15 percentage points when a student attended 6 meetings. This impact is significant but also a bit smaller than the impact of attendance. The difference between the predicted probabilities for 4 and 6 attended meetings ranges from 13 percentage points when a student handed in 5 tasks to 20 percentage points when a student handed in 7 tasks.

An important take-away message from Table 1 is that attendance and handing in tasks reinforce each other. That is, the impact of attendance is larger when a student hands in more tasks (from 13 to 20 percentage points, an increase of 7 points), and the impact of handed-in tasks is larger for students who attend more meetings (from 8 to 15 percentage points, also an increase of 7 points).

Notes: Shown are predicted probabilities and their 95% confidence intervals. The results are based on a logit model in which 175 students are clustered by 18 tutor groups. The model includes the number of attended meetings (att), the numbers of type I and type II tasks handed in, and their interactions. For tasks type I, all differences between the predicted probabilities are statistically significant when a student attends 5 or 6 meetings (p < 0.01). None of the differences between the predicted probabilities are statistically significant for tasks type II.

We further explore the impact of handing in tasks by looking at the type of task (Figure 3). The first group concerns general writing tasks that were specifically discussed in class, but for which students did not receive written feedback from tutors (tasks type I). The second group concerns writing tasks that directly prepared students for the final research proposal. These tasks were not specifically discussed in class, but students received extensive written feedback from tutors (tasks type II).
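The reinforcement between attendance and tasks reported for Table 1 can be checked with simple arithmetic. The sketch below is only an illustration: it uses the four percentage-point gaps quoted in the text, while the baseline probability for a student with 4 meetings and 5 tasks is a hypothetical placeholder, because the table values themselves are not reproduced in the text.

```python
# Percentage-point gaps quoted in the text for Table 1.
TASK_GAP_AT_4_MEETINGS = 8       # 5 -> 7 tasks, student attended 4 meetings
TASK_GAP_AT_6_MEETINGS = 15      # 5 -> 7 tasks, student attended 6 meetings
ATTENDANCE_GAP_AT_5_TASKS = 13   # 4 -> 6 meetings, student handed in 5 tasks
ATTENDANCE_GAP_AT_7_TASKS = 20   # 4 -> 6 meetings, student handed in 7 tasks


def build_grid(baseline):
    """Return pass probabilities (in %) keyed by (meetings, tasks).

    `baseline` is a HYPOTHETICAL probability for 4 meetings and 5 tasks;
    only the gaps between cells come from the text.
    """
    return {
        (4, 5): baseline,
        (4, 7): baseline + TASK_GAP_AT_4_MEETINGS,
        (6, 5): baseline + ATTENDANCE_GAP_AT_5_TASKS,
        (6, 7): baseline + ATTENDANCE_GAP_AT_5_TASKS + TASK_GAP_AT_6_MEETINGS,
    }


def interaction(grid):
    """Return how much each effect grows when the other variable is high."""
    attendance_low = grid[(6, 5)] - grid[(4, 5)]
    attendance_high = grid[(6, 7)] - grid[(4, 7)]
    tasks_low = grid[(4, 7)] - grid[(4, 5)]
    tasks_high = grid[(6, 7)] - grid[(6, 5)]
    return attendance_high - attendance_low, tasks_high - tasks_low


grid = build_grid(baseline=40)  # 40% is an assumed value, not a reported one
attendance_growth, task_growth = interaction(grid)
print(attendance_growth, task_growth)  # 7 7
```

Whatever baseline is chosen, both effects grow by the same 7 percentage points, which is what one interaction term implies: the four quoted gaps are mutually consistent (8 + 20 = 13 + 15).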

Whereas one might expect tasks type II to matter most, given that they prepare students for the final exam, we actually find that their effect was negligible. At the same time, handing in type I assignments – those discussed in class, without written feedback – did have a positive effect on the chances of passing the course. We explain this striking result by one of the core elements of PBL, namely that effective learning occurs through collaboration. While discussing a wide range of students’ assignments in class (tasks type I), students learn not only from reflecting on their own assignment but also from those of their fellow students. This increases their understanding of what is good academic writing and what is not.

These results also raise interesting questions regarding writing assignments, staff feedback and workload, and how these issues should be dealt with in an active learning environment such as PBL. Perhaps writing assignments – in different forms – can be integrated more into class discussions, decreasing the workload that normally comes with giving feedback on individual writing assignments?


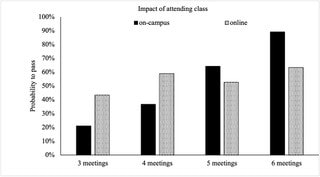

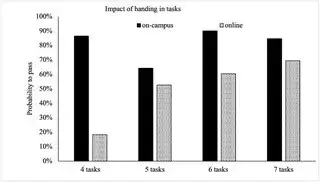

Co-authored with Arjan Schakel, originally published by Active Learning in Political Science on 10 February 2021.

Students and staff are experiencing challenging times, but, as Winston Churchill famously said, “never let a good crisis go to waste”. Patrick recently led a new undergraduate course on academic research at Maastricht University (read more about the course here). Due to COVID-19, students could choose whether they preferred online or on-campus teaching, which resulted in 10 online groups and 11 on-campus groups. We were presented with an opportunity to compare the performance of students who took the very same course, but did so either on-campus or online. Our key lesson: focus particularly on online students and their learning.

In exploring this topic, we build on our previous research on the importance of attendance in problem-based learning, which suggests that students’ attendance may have an effect on students’ achievements independent from students’ characteristics (i.e. teaching and teachers matter, something that has also been suggested by other scholars). We created an anonymised dataset consisting of students’ attendance, the number of intermediate small research and writing tasks that they had handed in, students’ membership of an on-campus or online group, and, of course, their final course grade. The latter was based on a short research proposal graded Fail, Pass or Excellent.

In total, 316 international students took the course, of whom 169 (53%) took it online and 147 (47%) on-campus. Of these, 255 submitted a research proposal, of whom 75% passed. One of the reasons why students did so well – normal passing rates are about 65% – might be that, given that this was a new course, the example final exam that they were given was one written by the course coordinator. Bolkan and Goodboy suggest that students tend to copy examples, so providing them may not necessarily be a good thing.
Yet students had also done well in previous courses, with the cohort seemingly being very motivated to do well despite the circumstances.

But on closer look it is very telling that 31% of the online students (52 out of 169) did not receive a grade, i.e. they did not submit a research proposal. This was 9.5% for the on-campus students (14 out of 147)[1]. Perhaps this is the result of self-selection, with motivated students having opted for on-campus teaching. In any case, it is clear that online teaching affects study progress, and that enhancing participation in examinations among online students needs to be prioritised by programme directors and course leaders.

We focus on students who attended at least one meeting (maximum of 6) and handed in at least one assignment (maximum of 7). Out of these 239 students, 109 were online students (46%) and 130 on-campus (54%). Interestingly, these 239 students behaved quite similarly across the online and on-campus groups: they attended on average 5 meetings (online: 4.9; on-campus: 5.3) and handed in an average of 5 to 6 tasks (online: 5.0; on-campus: 5.9).

We ran a logit model with a simple dummy variable as the dependent variable, which captures whether a student passed the course. As independent variables we included the total number of attended meetings and the total number of tasks handed in. Both variables were interacted with a dummy variable that tracked whether students followed online or offline teaching, and we clustered standard errors by 21 tutor groups. Unfortunately, we could not include control variables such as age, gender, nationality and country of pre-education. These would have helped to rule out alternative explanations and to gain more insight into what factors drive differences in performance between online and offline students.
For example, international students may have been more likely to opt for online teaching and may have been confronted with time-zone differences, language issues, or other problems.

Figure 1 displays the impact of attending class on the probability of passing the final research proposal. The predicted probabilities are calculated for an average student who handed in 5 tasks. Our first main finding is that attendance did not matter for online students, but it did for on-campus students. The differences in predicted probabilities for attending 3, 4, 5, or 6 meetings are not statistically significant (at the 95% confidence level) for online students, but they are for on-campus students. A student who attended the maximum of six on-campus meetings had a 68 percentage-point higher probability of passing than a student who attended 3 meetings (89% versus 21%) and a 52 percentage-point higher probability than a student who attended 4 meetings (89% versus 37%).

Figure 2 displays the impact of handing in tasks on the probability of passing the final research proposal. The predicted probabilities are calculated for an average student who attended 5 online or on-campus meetings. Our second main finding is that handing in tasks did not matter for on-campus students, but it did for online students. The differences in predicted probabilities for handing in 4, 5, 6, or 7 tasks are not statistically significant (at the 95% confidence level) for on-campus students, but they are for online students. A student who handed in the maximum of seven tasks had a 51 percentage-point higher probability of passing than a student who handed in four tasks (69% versus 18%) and a 16 percentage-point higher probability than a student who handed in five tasks (69% versus 53%).
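The gap in non-submission rates reported earlier in this post (52 of 169 online students versus 14 of 147 on-campus students) can be checked with a standard two-proportion z-test. The sketch below is an assumption about how such a check could be done, not a reconstruction of the test behind footnote [1]: the function name is ours, and because the post does not state which test or sample restriction was used, the statistic need not match the reported value exactly.

```python
import math


def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z-test; returns (difference, z, two-sided p)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    # Standard error under the null hypothesis of equal proportions.
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p1 - p2, z, p_value


# Counts taken from the text: students without a grade per group.
diff, z, p_value = two_proportion_z(52, 169, 14, 147)
print(f"difference = {diff:.3f}, z = {z:.2f}, p = {p_value:.2g}")
```

With these counts the difference is about 21 percentage points and z is roughly 4.6, which agrees with the footnote's conclusion that the gap is statistically significant at the 99% level.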

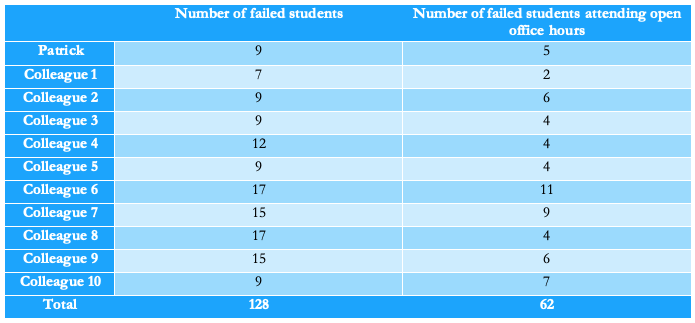

Note that we do not think that attendance does not matter for online students or that handing in tasks does not matter for offline students. Our dataset does not include a sufficient number of students to expose these impacts. From our previous research we know that in general we can isolate the impact of various aspects of course design with data from three cohorts (around 900 students). The very fact that we find remarkably clear-cut impacts of attendance among on-campus students and of handing in tasks among online students in a relatively small group of students (fewer than 240) reveals that these impacts are so strong that they surface and become statistically significant even in a dataset as small as ours.

This is why we feel confident to advise programme directors and course leaders to focus on online students. As Alexandra Mihai also recently wrote, it is worth investing time and energy in enhancing online students’ participation in final examinations and in offering them many different small assignments to be handed in throughout the course. This is not to say that no attention should be given to on-campus students and their participation in meetings but, given limited resources and the amount of gain to be achieved among online students, we think it would be wise to first focus on online students.

[1] The difference of 21 percentage points in no grades between online and offline students is statistically significant at the 99% level (t = 4.78, p < 0.001, N = 314 students).

This post was originally published by Active Learning in Political Science on 6 December 2019.

I’ve always considered myself an approachable teacher; someone students can come to with questions or worries or just for a talk. And from what I hear, I am considered to be approachable. Still, I am noticing something that worries me. I have been holding open office hours for about 9 years now, but fewer students have been showing up.
Weeks go by when no one comes, even in periods when I am teaching and coordinating courses. I know that I am not the first one to raise this issue. It is even the topic of students’ research! But I still believe that students can learn from meeting with us for input and feedback, whether this concerns a relatively simple question or my assessment of their paper. So, why does no one come and talk to me anymore?

Turnout during open office hours was again low during the first weeks of this year, when I coordinated and taught a first-year course on academic research and writing. At the end, students write a short paper. These papers are randomly distributed among teaching staff, myself plus 10 other colleagues – together we teach 25 problem-based learning groups of about 12 students. As soon as results are out, all students, whether they have failed or passed, are invited to meet with the person who marked their paper to discuss the assessment during scheduled open office hours.

This year I asked colleagues to inform me about the number of students who had shown up. The table below shows the data for those who failed the course. Interestingly, one colleague had to hold her open office hours via Skype; no fewer than 7 out of 9 students showed up. Yet there is some research that suggests that using technology does not make a huge difference. Why did so few students show up?

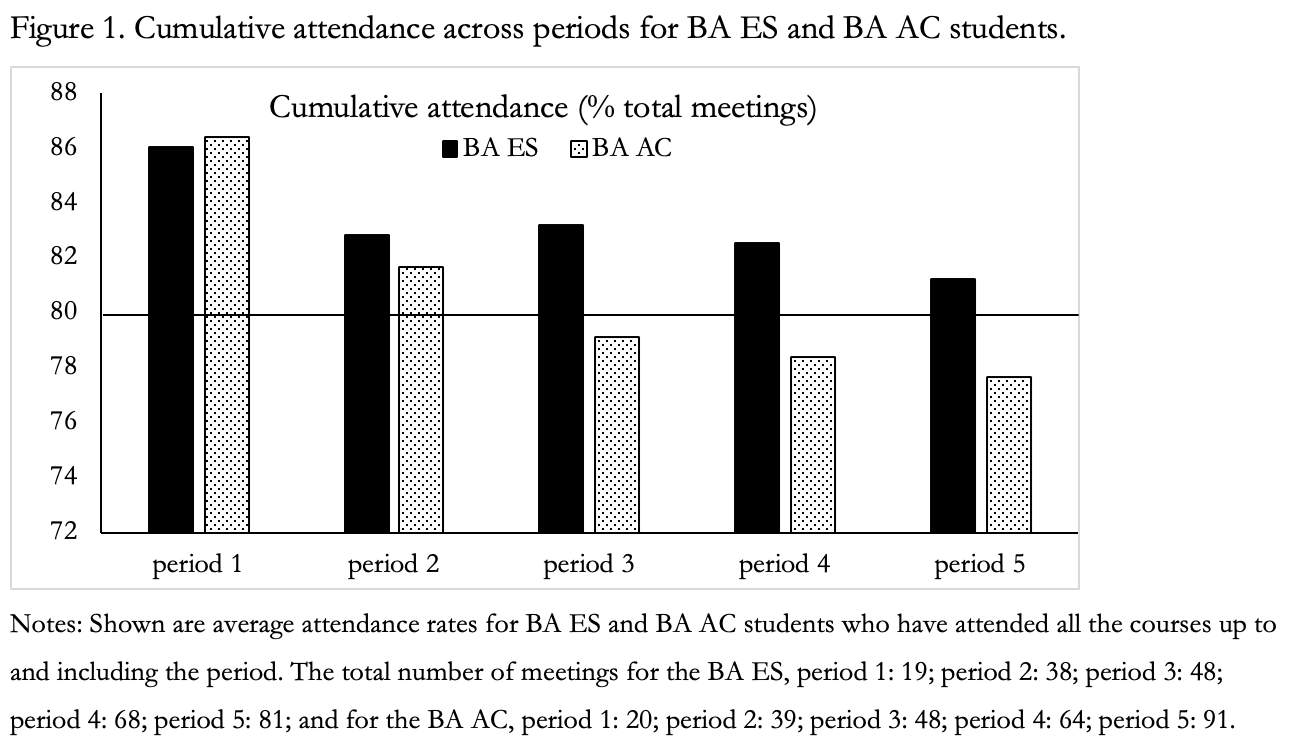

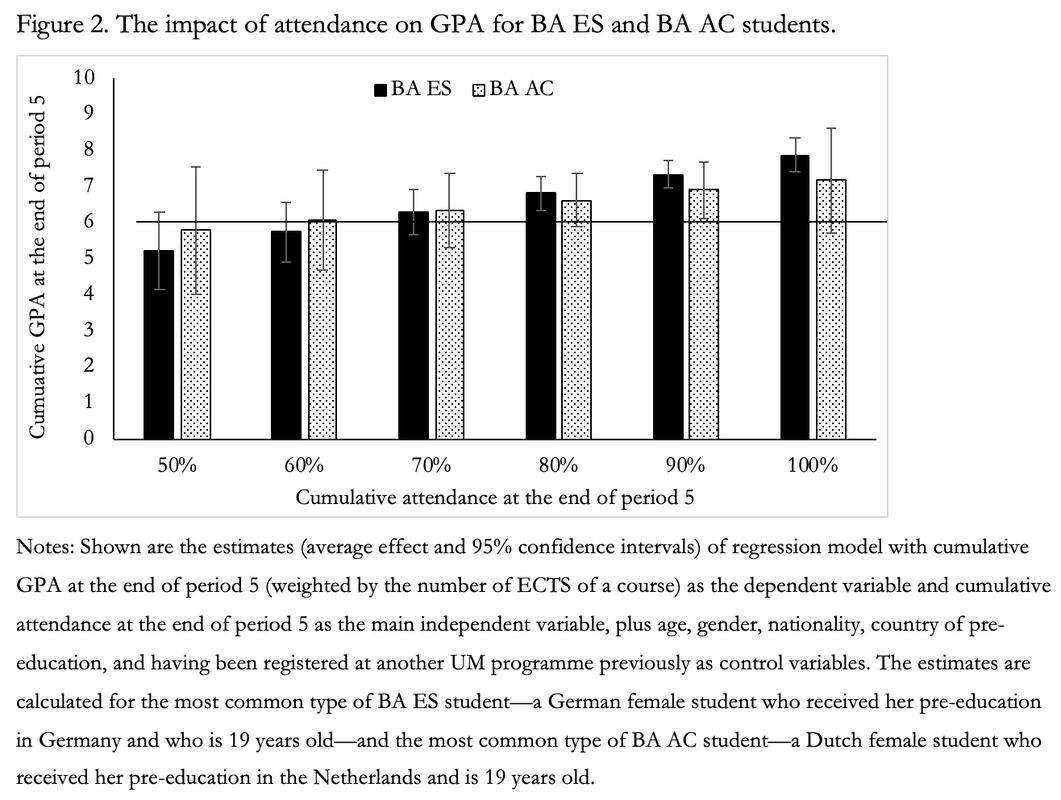

I decided to ask the students themselves some simple questions during a session in our mentor programme. The approximately 100 students who attended (out of nearly 300) might not be representative of the group of students that does not turn up in my office. But I still learned something interesting.

Of the 86 students who completed the questions via an online survey tool, 36 had failed the course and 29 had attended the open office hours. Those who attended generally did so to get clarification regarding their paper’s assessment. Of those who did not attend, some simply stated that they had passed the course and saw no need to discuss the feedback. Others referred to having been sick, stressed and/or busy with the new courses – when asked, quite a few of these students had not written to staff to ask for another appointment.

Asked why they thought others had not come, some answered that these must be lazy students or that they lacked motivation because they knew what they had done wrong. But quite a few answers touched upon something that we might all too easily overlook, namely students’ expectations regarding feedback opportunities. These answers did not just concern not knowing what to do with feedback. For instance, one student wrote that students who did not show up might be “insecure and/or uncomfortable with getting feedback”. Another student wrote that “you have limited time with the tutors and tutors often have a lot of work and not much time for you”.

Could it be that low attendance during open office hours is due to barriers to students’ engagement with feedback or, more generally, a lack of feedback literacy? This is something that I want to explore in more detail. I have already briefly discussed this with our academic writing advisor, and we may want to see whether we can specifically address this issue in a forthcoming curriculum review.

But what about solutions for the here and now? There are many ways in which open office hours are organised, but what works best?
One colleague suggested changing times. Admittedly, my open office hours are Wednesdays from 08:30-09:30, but this never was a problem – and the feedback open office hours during the aforementioned course were scheduled in the afternoon. Elsewhere in cyberspace people have been suggesting other solutions, including a rethink of faculty office space. I’d love to squeeze in a couch, but my office is rather tiny. On Twitter someone suggested that the wording ‘open office hours’ is unclear to students and that ‘student drop-in hours’ may make more sense. So, the name plate next to my door now mentions my student drop-in hours and so does the syllabus of an upcoming course. Let’s see what happens. I hope students will come and talk to me again. The door’s open, simply turn up at the stated time!

This post was co-authored by Arjan Schakel (University of Bergen) and originally published by the FASoS Teaching & Learning Blog on 30 September 2019.

The abolishment of minimum attendance requirements at FASoS just over two years ago has been a recurring topic of discussion. Literature on students’ persistence and results often highlights attendance as important, because absenteeism would increase the risk of dropout. Intuitively, one would expect attendance to be even more important in programmes with an active learning environment, such as PBL. Research finds that active learning environments have a positive effect on students’ study success, yet few studies have looked at the importance of (non-)attendance in such learning environments. In 2018, we published an article in Higher Education that addresses this gap. In this blog, we provide new data to contextualise the discussions on attendance, and present options for further research and refinement of the faculty’s attendance policy.

Compulsory attendance and study success

In our 2018 article, we investigated the effect of course (non-)attendance on study success of three BA ES cohorts (2012/2013, 2013/2014, 2014/2015).
We looked at two forms of study success: retention, namely differences in attendance between students who passed the threshold of 42 ECTS of the binding study advice (BSA) and those who did not; and grades, namely the effect of attendance on students’ grade point average (GPA). We divided the 1059 students enrolled at the start of the three years into three sub-groups: (1) 650 students who attended all courses; (2) 548 students who also passed the BSA threshold; (3) 326 students who also attended the minimum number of required meetings at the end of the year. Controlling for a range of factors, including gender, age, nationality, pre-education and GPA of the previous period, we found that attendance has a clear additive impact beyond “active engagement” or “commitment to PBL”.

Doesn’t this depend on the nature of PBL or on students’ overall commitment? Could certain rules, like minimum attendance requirements, stimulate desired behaviour? Or could the results be due to some level of endogeneity, given that the best-performing students tend to attend more meetings? Our data enabled us to differentiate within the group of students. Even among the committed students – those who met the minimum attendance requirements in all courses – we found that higher attendance has a substantial impact on the amount of ECTS obtained and the end-of-year GPA.

Non-compulsory attendance and study success

We have continued to collect data on attendance and study success since the abolishment of minimum attendance requirements, superbly supported by the exam office. Below we present data for all first-year BA ES and BA AC students in the academic year 2018/2019. Figure 1 shows cumulative attendance of students for period 1 until period 5. BA ES students attend more than 80% of tutorials, while BA AC students attend just below 80% after period 2, 79% in period 3, and 78% in periods 4 and 5. But overall, attendance among FASoS students is quite good.
However, the number of students who miss one or more courses increases dramatically for BA AC students. Figure 1 displays cumulative attendance for those students who attended all courses. Yet, whereas of the 276 BA ES students who started in period 1, 227 (82%) had attended all courses by the end of period 5, of the 103 BA AC students only 42 (41%!) had done so.

We believe that the low cumulative attendance of BA AC students is worrisome, because our research clearly reveals that attendance is strongly associated with GPA. Figure 2 displays the impact of cumulative attendance at the end of period 5 on the GPA at the end of the year. It shows that students who attend 80% or more of the total meetings receive a cumulative GPA above the passing grade of 6.0. The whiskers indicate the 95% confidence intervals around the average, meaning that we are pretty sure (95% confident) that the true average lies within the boundaries of the whiskers. The lower bounds of the whiskers do not cross the 6.0 line when cumulative attendance surpasses 80%, except for BA AC students, because the number of students on which the estimates are based is quite low: 25 instead of 143 for the BA ES.
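Why a group of 25 students produces much wider whiskers than a group of 143 follows from how a confidence interval for a mean is built. The sketch below uses the normal approximation (z = 1.96) and an assumed standard deviation of 1.0 grade points; that value is purely hypothetical, since the spread of GPAs is not reported here. Only the two group sizes come from the text.

```python
import math


def ci_half_width(sd, n, z=1.96):
    """Half-width of an approximate 95% confidence interval for a mean."""
    return z * sd / math.sqrt(n)


ASSUMED_SD = 1.0  # HYPOTHETICAL spread of GPAs, not a reported figure

small_group = ci_half_width(ASSUMED_SD, 25)    # BA AC group size from the text
large_group = ci_half_width(ASSUMED_SD, 143)   # BA ES group size from the text

print(f"n = 25:  +/- {small_group:.2f}")
print(f"n = 143: +/- {large_group:.2f}")
print(f"ratio:   {small_group / large_group:.2f}")
```

Whatever the true standard deviation, equal spread in both groups would make the whiskers for 25 students about 2.4 times as wide as those for 143 students, since the half-width shrinks with the square root of the group size. For a group as small as 25, a t-based interval would be slightly wider still.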

Final thoughts

Our new results strongly indicate that FASoS should strive for at least 80% attendance among students. As Gump writes, “[s]tudents who wish to succeed academically should attend class, and instructors should likewise encourage class attendance”. We do not claim that attendance per se has an impact on study success, because our findings may very well be driven by intrinsically motivated, well-prepared, and therefore well-performing students who also attend more tutorials.

Can we stimulate students to attend without resorting to external incentives such as obligatory attendance? Other policies are possible, including incentive schemes and showing students how (non-)attendance affects their results. During the past two years we used the latter in the BA ES, presenting attendance data during meetings of the mentor programme. However, this data was not always available. Pursuing this policy would require faculty commitment to rigorous data collection and analysis.

In addition, it is not just attendance that matters in PBL, but also preparation and participation. Since FASoS data is administrative in nature, we cannot reflect on these and other factors, including motivation, self-efficacy and whether or not we sufficiently tap into students’ intrinsic motivation to attend tutorials. The faculty should therefore also commit to a thorough qualitative study regarding students’ perspectives on the importance of attendance. |


|
